In “What no Hardware RAID?” I got the external enclosure working on my home Linux computer. In this post I describe the problems I had getting it to work in a Dell PowerEdge 6650 server.

Drop the card in

I went down to the data center one afternoon thinking I had a 20-minute job: put a PCI card in a server, connect the enclosure and reboot the server. Well, it turned out life is not that easy. No matter what I did, Linux would load the driver for the RAID controller card and attempt to find the disks connected to it, but every time it failed to find them.

Troubleshooting

My first thought was that I had screwed up the kernel build, so I rebuilt my vanilla kernel (2.6.25) from scratch. Still no success. My next thought was that maybe the server motherboard did not like the version of the firmware on my RAID controller card. That seemed likely since my home computer would not boot with one version of the firmware. So for the next day I tried a myriad of kernel versions, RAID controller firmware revisions, and combinations of the two!

With several of those combinations exhausted, the department sys admin recommended that I try moving the card to PCI slot 1 on the server. That slot was PCI only, while the remaining 7 slots were PCI + PCI-X. I had originally placed the card in slot 8 because it was closest to the external enclosure, and the 1 meter cable wouldn’t reach if I used slot 1. So for the purposes of the test I set the external enclosure on the floor. I then tested, and the system still did not work, so I moved the card back to slot 8 and the enclosure to its shelf. And so went another day of troubleshooting.

Getting more frustrated, I began to accept that maybe this RAID controller was fighting with the PERC SCSI RAID controller in the server. Unfortunately, the test was to remove my RAID card, thus corrupting my internal disks with the OS. I eventually got the guts up to do that… still no luck.

Then I read, in detail, every user review of these 2 products (the RAID controller card and the external enclosure) on newegg.com. In a couple of reviews, people complained that Newegg specified the card as PCI-X when it is actually PCI. I didn’t see that as a problem because I was using PCI + PCI-X slots. Then I reached a review that said the card will only work at 5V despite fitting into a 3.3V PCI slot. Huh?

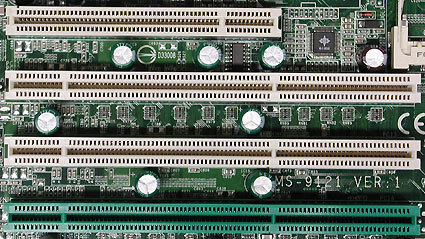

In comes Wikipedia. Now I see: PCI-X operates at 3.3V. Older revisions of PCI operate at 5V, while a newer revision operates at 3.3V. The standard uses different physical ‘notches’ in the bottom edge of a PCI card to keep you from inserting it into an incompatible slot. As you see in the figure below, the bottom two slots are longer and have differently placed vertical ‘bars’. Those bars indicate which voltage the slot uses. A bar near the left (closer to the edge of the motherboard) indicates 3.3V. A bar near the right (as in the top slot) indicates 5V. The bottom two slots in this picture are PCI-X slots, which are what I was using.

Now take a look at the PCI RAID controller again.

You can clearly see there are two ‘notches’ cut into the interface. These notches indicate that it can be inserted into a 3.3V slot or a 5V slot and the card will handle the difference. You can see that the card will also fit into either slot type in the picture above.

Back to the Newegg user review. Is it true that the card can only work with 5V even though the card was made to fit into 3.3V slots? A quick email to the manufacturer revealed that the user was correct. The Syba SD-SATA2-2E2I, Silicon Image/SIL3124 card will only work in 5V PCI slots. What a bust! I wasted 3 days trying to make it work in a 3.3V slot. All because the manufacturer cut a 3.3V notch into a card that cannot run at 3.3V.
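To make the mismatch concrete, here is a minimal Python sketch of the keying logic described above. It is only an illustration, not anything from the PCI spec or the vendor: the `Slot` and `Card` classes and the `fits`/`works` helpers are names I made up, and I am assuming that slot 1 (PCI only) is a 5V slot and slot 8 (PCI + PCI-X) is a 3.3V slot, which is what the story so far suggests.

```python
from dataclasses import dataclass

# Signaling voltages of conventional PCI, used here as simple labels.
V3_3 = "3.3V"
V5 = "5V"


@dataclass(frozen=True)
class Slot:
    name: str
    key: str  # voltage that the slot's key (the 'bar') stands for


@dataclass(frozen=True)
class Card:
    name: str
    notches: frozenset    # voltages the edge connector is notched for
    supported: frozenset  # voltages the electronics can really handle


def fits(card, slot):
    """Mechanical check: the card needs a notch matching the slot's key."""
    return slot.key in card.notches


def works(card, slot):
    """Electrical check: the card must fit AND support the slot's voltage."""
    return fits(card, slot) and slot.key in card.supported


# Hypothetical descriptions; the slot voltages are my assumption.
slot1 = Slot("slot 1 (PCI only)", V5)
slot8 = Slot("slot 8 (PCI + PCI-X)", V3_3)

# Notched for both voltages, but (per the manufacturer) only good at 5V.
syba = Card("Syba SD-SATA2-2E2I", frozenset({V3_3, V5}), frozenset({V5}))

for slot in (slot1, slot8):
    print(slot.name, "fits:", fits(syba, slot), "works:", works(syba, slot))
```

The point of keeping `notches` and `supported` as separate fields is that the keying is supposed to guarantee they are the same set. On this card they are not, so it fits slot 8 but does not work there.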

The fix was easy: I went back and put the card into PCI slot 1 again. On the first boot my hard drives were detected by Linux (CentOS 5.1 with vanilla kernel 2.6.25)! Yay! Why didn’t this work a few days ago when I tried? I have no clue.

So now I have my Sil4726 enclosure working with my Sil3124 PCI card on Linux!


#1 by Dan on July 15, 2009 - 5:21 pm

I have a Shuttle with a PCI Express X16 and a PCI slot. My video card is in the PCI-E slot, so I only have an available PCI slot. I need a PCI SATA II card with port multiplier support. I’m looking at the same card you bought… the SYBA with the SIL3124 Chipset. Here is my motherboard…

http://eu.shuttle.com/en/Portaldata/1/Resources/12_products/xpc_barebones/sp35p2/sp35p2_mb.jpg

The PCI slot is the WHITE slot on the upper right between the yellow IDE and yellow PCI-Express slot. Is this 5V? Will this card work in my motherboard?

I’m running Server 2008 x64. Is there 64-bit driver support? I saw on your other post, that you installed it on Server 2003. But you didn’t mention if it was x32 or x64.

Thanks!

#2 by Ryan on July 15, 2009 - 6:41 pm

I expect the card will work with your motherboard. The notch location in your motherboard PCI slot indicates it is a 5V slot and this is a 5V card. I tested with Server 2003 x32. I don’t know about 64-bit driver support. I would recommend looking into that.

#3 by Ryan on July 15, 2009 - 7:30 pm

Just figured I would post a general update here. It’s been a year and I’m still running with this exact same configuration, virtually maintenance free. The biggest issue I had was sys admins inadvertently knocking the eSATA cable loose. My OS data is not on these drives, so the OS didn’t crash; I just had to plug them back in and remount, and we were running again with no reboot. Definitely getting our bang for the buck.

#4 by Simon on October 26, 2009 - 3:23 pm

Thanks for this, I’ve spent 3 days trying to get this to work until I stumbled upon this post. Unfortunately all I have are 3 PCI-X slots, so I have to get a new card, but at least I can stop wasting my time.